Once again, Huang essentially declared a blowout, forecasting a staggering $1 trillion in orders for Nvidia’s most sophisticated AI chips through 2027, driven by the explosion of AI infrastructure now being built around the world.

In fact, every major move in AI—from chatbots and agents to applications in the workplace, schools, and the military—runs on Nvidia hardware, software, and systems. Nvidia has also invested billions to support the AI ecosystem, partnering with both OpenAI and Anthropic, as well as funding data center companies and AI startups.

So why isn’t Huang—and Nvidia as a whole—a target of the AI backlash?

Still, Nvidia made it clear at GTC that it is positioning itself not just as a chipmaker but as the provider of entire AI computing systems powering the new “inference” phase of AI. (Inference means running trained models to generate outputs, as opposed to training those models in the first place, and it will require an enormous new round of infrastructure investment.) That ambition goes beyond Nvidia’s traditional “picks and shovels” role. These days, Nvidia is increasingly trying to control the entire swath of systems, software, and platforms that power the AI economy.

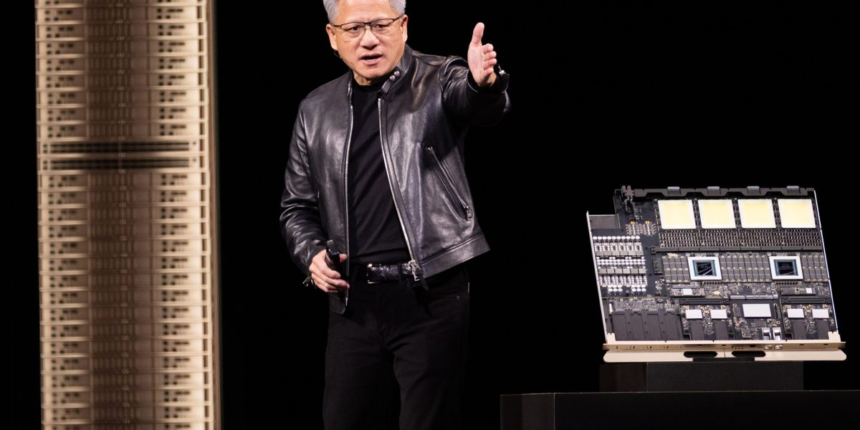

The centerpiece of Huang’s keynote was the launch of the company’s Vera Rubin platform, which combines multiple chips and system components designed to run large AI models and “agentic AI” systems. The platform includes seven new chips and several rack-scale systems intended to power extremely large AI clusters containing hundreds of thousands of GPUs.

Nvidia also introduced NemoClaw, an open-source platform for building enterprise AI agents, allowing companies to create agents, connect them to corporate data, and deploy them on Nvidia hardware.

At the same time, Nvidia is continuing to invest aggressively across the AI ecosystem. The company has poured billions into dozens of AI startups over the past year. Most recently, it invested $2 billion in AI cloud company Nebius, and it is backing former OpenAI CTO Mira Murati’s new venture, Thinking Machines, with plans for more than 1 gigawatt of Nvidia-powered compute capacity.

The company is also continuing its push into autonomous vehicles, where Nvidia chips and software platforms are increasingly being adopted by carmakers building self-driving systems.

Finally, Huang used GTC to promote what he called AI’s “five-layer cake.” The AI economy, he argued, depends on five layers—energy, chips, infrastructure, models, and applications—all of which must scale together to support the massive buildout now underway. Nvidia, not coincidentally, sits squarely in the middle of that stack—connecting most of those layers together.

For now, Nvidia still benefits from the traditional insulation of a picks-and-shovels supplier. But as the sprawling AI data centers rising across the country fill with Nvidia hardware, and as the company pushes deeper into the systems powering them, Nvidia may find itself far more exposed to the debate over AI’s consequences.