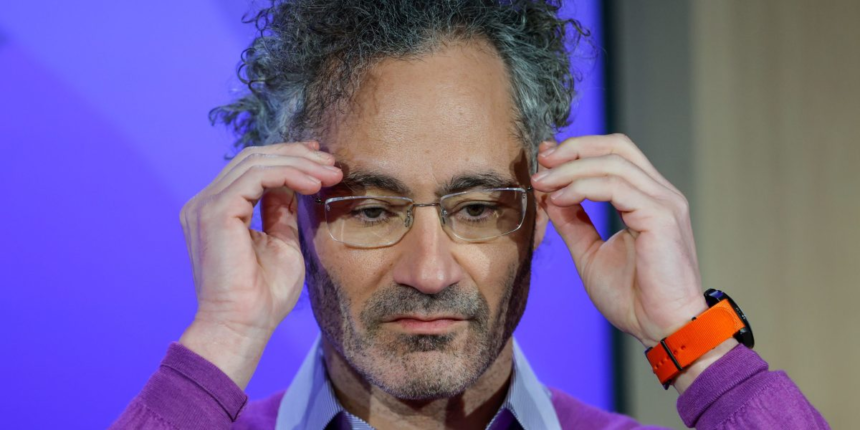

Karp was commenting on a topic that has taken the AI world by storm: In what capacity should AI companies collaborate with the government? A closer look explains why a dustup between the Pentagon and two totally separate companies (Anthropic and OpenAI) has prompted Karp’s displeasure.

As Karp put it: “If Silicon Valley believes we are going to take away everyone’s white-collar job—meaning primarily Democratic-shaped people that you might grow up with, highly educated people who went to elite schools or went to schools that are almost elite for one party—and you’re going to sue the military. If you don’t think that’s going to lead to nationalization of our technology, you’re retarded.”

Whoa. So what’s bothering Mr. Karp?

While Karp could have chosen less offensive language to make his point, he was touching on a raw nerve—one that is acutely personal for Palantir. “You cannot have technologies that simultaneously take away everyone’s job,” he said, and then be perceived as screwing the military. That tension isn’t abstract for Palantir. It could very well be a live operational crisis.

“There’s a difference between U.S. military and surveillance,” he said at the summit. “Despite what everyone thinks, Palantir is the anti-surveillance company,” he said, pushing back on claims that the company named after an all-seeing surveillance device from Lord of the Rings is fundamentally about surveillance. Every technical expert knows this to be the case, but the proverbial “person online” simply has the wrong idea, Karp argued, “so I end up in every conversation that I don’t want to be in.”

For Palantir, that sequence of events is not an abstraction; it is a direct operational threat. Palantir’s flagship AI Platform (AIP) relies on plugging best-in-class frontier models into its defense and intelligence workflows. Claude Opus is among the most capable of those models, prized for its reasoning depth and reliability in high-stakes environments. If Anthropic were blacklisted as a military supply-chain risk, or if its terms of service effectively barred it from the classified settings where Palantir operates, Palantir would lose access to one of its most powerful AI engines. It would be forced to retool its platform around alternative models mid-contract, a costly and reputationally damaging disruption for a company whose entire brand promise is mission-critical reliability.

“Again, there’s a lot of subtlety here behind the curtain,” Karp acknowledged. “I’ve been heavily involved in that subtlety—what can be deployed, where it can be deployed.”

The stakes, Karp argued, go well beyond any single Pentagon contract or any single company’s policy decision. “The danger for our industry,” he warned, “is that you get a famous horseshoe effect where there’s only one thing people agree on—and that’s that this is not paying the bills, and people in our industry should be nationalized.”

That populist convergence—where left and right alike turn on tech—becomes inevitable, in Karp’s telling, if AI companies strip white-collar workers of their livelihoods while simultaneously refusing to serve the military. Again, he was pointed about who those workers are: “Primarily Democratic-shaped people that you might grow up with—highly educated people who went to elite schools, or went to schools that are almost elite, for one party.”

In Karp’s view, the government will not allow AI companies that have amassed this much power to keep regulating themselves without government oversight, let alone dictate terms of use back to the government itself. “This is where that path is going,” he said simply. The only way for companies like Palantir to retain their position, their contracts, and their access to the frontier AI models that power their platforms is to play by the government’s rules when called upon. For Palantir, losing that seat at the table doesn’t just mean bad optics. It means losing the technological inputs that make its core product work.